Deep Learning Project – Face Recognition with Python & OpenCV

Face Recognition with Python: identify and recognize a person in live, real-time video.

In this deep learning project, we will learn how to recognize human faces in live video with Python. We will build the project on dlib’s face recognition network. Dlib is a general-purpose C++ toolkit with Python bindings that can be used to build real-world machine learning applications.

In this project, we will first understand how the face recognizer works. Then we will build face recognition with Python.

Face Recognition with Python, OpenCV & Deep Learning

About dlib’s Face Recognition:

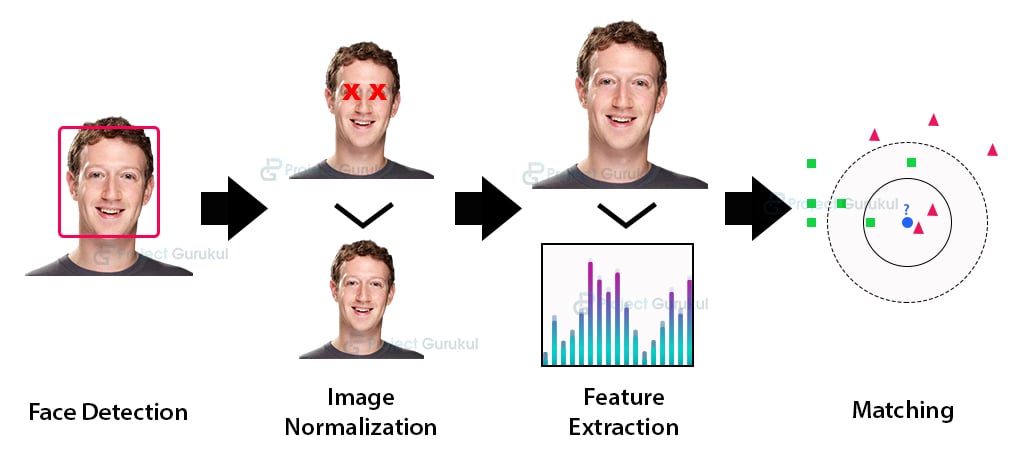

The face_recognition package, available from PyPI, is built on dlib’s face recognition algorithms. Its API allows us to implement face detection, real-time face tracking and face recognition applications.

Project Prerequisites:

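You need to install the dlib library and the face_recognition package from PyPI. The code also imports cv2, which is provided by the opencv-python package:

    pip3 install dlib
    pip3 install face_recognition
    pip3 install opencv-python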

Steps to implement Face Recognition with Python:

We will build this Python project in two parts, each in its own file:

- embedding.py: takes images of a person as input and creates face embeddings from them.

- recognition.py: recognizes that person in the camera frames.

1. embedding.py:

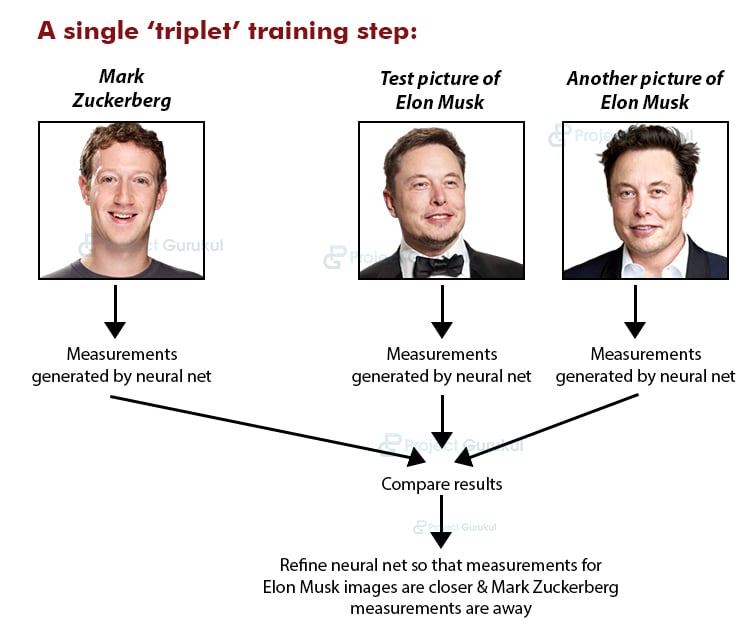

First, create a file embedding.py in your working directory. In this file, we will create face embeddings of a particular human face. We create the embeddings with the face_recognition.face_encodings function. Each face embedding is a 128-dimensional vector. In this vector space, the embeddings of different images of the same person lie close to each other. After creating the face embeddings, we will store them in a pickle file.
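To make "close to each other" concrete: the face_recognition library compares embeddings by Euclidean distance, and its compare_faces function counts two faces as a match when that distance is at most a tolerance, which defaults to 0.6. The sketch below illustrates the idea only. The 4-dimensional vectors are made up to stand in for real 128-dimensional embeddings, and the is_same_person helper is a hypothetical name, not part of the library.

```python
import math

# Default tolerance used by face_recognition.compare_faces.
TOLERANCE = 0.6


def is_same_person(embedding_a, embedding_b, tolerance=TOLERANCE):
    """Return True when two embeddings lie within `tolerance` of each other."""
    return math.dist(embedding_a, embedding_b) <= tolerance


# Made-up 4-dimensional stand-ins for real 128-dimensional embeddings.
person_a_photo_1 = [0.10, 0.20, 0.30, 0.40]
person_a_photo_2 = [0.12, 0.18, 0.33, 0.41]
person_b_photo_1 = [0.90, -0.50, 0.10, 0.70]

print(is_same_person(person_a_photo_1, person_a_photo_2))  # True: distance is about 0.04
print(is_same_person(person_a_photo_1, person_b_photo_1))  # False: distance is about 1.12
```

A lower tolerance makes matching stricter, at the cost of more faces being reported as unknown.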

Paste the code below into embedding.py.

- Import the necessary libraries:

    import cv2
    import face_recognition
    import pickle

- To identify the person in the pickle files, take their name and a unique id as input:

    name = input("Enter name: ")
    ref_id = input("Enter id: ")

- Create a pickle file and dictionary to store face encodings:

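    # Load the id -> name dictionary if it exists, otherwise start with an empty one.
    try:
        with open("ref_name.pkl", "rb") as f:
            ref_dictt = pickle.load(f)
    except FileNotFoundError:
        ref_dictt = {}

    # Register this person and write the dictionary back to disk.
    ref_dictt[ref_id] = name
    with open("ref_name.pkl", "wb") as f:
        pickle.dump(ref_dictt, f)

    # Load the id -> list-of-embeddings dictionary, or start with an empty one.
    try:
        with open("ref_embed.pkl", "rb") as f:
            embed_dictt = pickle.load(f)
    except FileNotFoundError:
        embed_dictt = {}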
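The load-or-create pattern used for both pickle files can be factored into two small helpers. This is only a sketch: load_pickle_or_default and save_pickle are hypothetical names, not functions from the pickle module, and the demonstration writes to a temporary directory rather than to the project's real files.

```python
import pickle
import tempfile
from pathlib import Path


def load_pickle_or_default(path, default):
    """Return the unpickled object at `path`, or `default` if the file is missing."""
    try:
        with open(path, "rb") as f:
            return pickle.load(f)
    except FileNotFoundError:
        return default


def save_pickle(path, obj):
    """Write `obj` to `path`; the file is closed even if pickling fails."""
    with open(path, "wb") as f:
        pickle.dump(obj, f)


# Simulate two runs of embedding.py in a temporary directory.
with tempfile.TemporaryDirectory() as tmp:
    names_path = Path(tmp) / "ref_name.pkl"

    first_run = load_pickle_or_default(names_path, {})   # file missing: starts empty
    first_run["001"] = "Alice"
    save_pickle(names_path, first_run)

    second_run = load_pickle_or_default(names_path, {})  # file present: keeps "001"
    second_run["002"] = "Bob"
    save_pickle(names_path, second_run)

    final_names = load_pickle_or_default(names_path, {})

print(final_names)  # {'001': 'Alice', '002': 'Bob'}
```

Catching only FileNotFoundError, rather than using a bare except, means a corrupted pickle file raises an error instead of being silently replaced by an empty dictionary.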

- Open the webcam, take 5 photos of the person as input, and create their embeddings:

Here, we will store the embeddings of a particular person in the embed_dictt dictionary, which we created in the previous step. In this dictionary, we will use the person's ref_id as the key.

To capture images, press ‘s’ five times. If you want to stop the camera, press ‘q’. Press the keys while the camera window has focus, not in the terminal:

    webcam = cv2.VideoCapture(0)
    captured = 0

    # Keep the camera open until five photos are captured or 'q' is pressed.
    while captured < 5:
        check, frame = webcam.read()
        if not check:
            print("Could not read a frame from the camera.")
            break

        cv2.imshow("Capturing", frame)
        key = cv2.waitKey(1) & 0xFF

        if key == ord('s'):
            # face_recognition expects RGB images, while OpenCV delivers BGR.
            rgb_frame = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
            face_locations = face_recognition.face_locations(rgb_frame)
            if face_locations:
                # Encode the first detected face and store it under this person's id.
                face_encoding = face_recognition.face_encodings(rgb_frame, face_locations)[0]
                embed_dictt.setdefault(ref_id, []).append(face_encoding)
                captured += 1
                print(f"Captured photo {captured} of 5.")
            else:
                print("No face found, please try again.")
        elif key == ord('q'):
            print("Turning off camera.")
            break

    webcam.release()
    cv2.destroyAllWindows()
    print("Camera off.")

- Update the pickle file with the face embedding.

Here we store embed_dictt in a pickle file, so that we can later load the person's embeddings directly from this file to recognize them:

    with open("ref_embed.pkl", "wb") as f:
        pickle.dump(embed_dictt, f)
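The two pickle files are linked only by ref_id: ref_name.pkl maps each id to a name, and ref_embed.pkl maps each id to a list of embeddings. If an id has embeddings but no name, a later lookup of that id in the name dictionary raises a KeyError. The sketch below shows one way to check the two dictionaries against each other before running recognition. The helper names and the sample data are hypothetical.

```python
def count_embeddings(embed_dictt):
    """Return how many embeddings are stored for each ref_id."""
    return {ref_id: len(embeds) for ref_id, embeds in embed_dictt.items()}


def find_unnamed_ids(ref_dictt, embed_dictt):
    """Return ids that have stored embeddings but no entry in the name dictionary."""
    return sorted(ref_id for ref_id in embed_dictt if ref_id not in ref_dictt)


# Made-up contents standing in for the two loaded pickle files.
sample_names = {"001": "Alice"}
sample_embeds = {
    "001": [[0.1, 0.2], [0.1, 0.3]],  # two short stand-in embeddings
    "002": [[0.9, 0.8]],              # embeddings exist, but no name was saved
}

print(count_embeddings(sample_embeds))                # {'001': 2, '002': 1}
print(find_unnamed_ids(sample_names, sample_embeds))  # ['002']
```

In a real run, the two sample dictionaries would be replaced by the objects loaded from ref_name.pkl and ref_embed.pkl.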

Now it’s time to execute the first part of the Python project.

Run the file, enter the person’s name and ref_id, and capture five images:

python3 embedding.py

2. recognition.py:

Here we will again create the person’s embeddings, this time from the camera frame. Then we will match the new embeddings against the stored embeddings from the pickle file. New embeddings of the same person will be close to that person's stored embeddings in the vector space, and this is how we recognize the person.
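The matching step can be sketched without a camera as a nearest-neighbour search with a distance threshold. The example below uses only the standard library and made-up 3-dimensional vectors in place of real 128-dimensional embeddings. The identify function is a hypothetical helper, and the 0.6 threshold is assumed from the default tolerance of face_recognition.compare_faces.

```python
import math


def identify(probe, known_encodings, known_ids, names_by_id, tolerance=0.6):
    """Return the name of the closest stored embedding, or "Unknown"."""
    # With nothing stored there is nothing to compare against.
    if not known_encodings:
        return "Unknown"

    distances = [math.dist(probe, known) for known in known_encodings]
    best_index = min(range(len(distances)), key=distances.__getitem__)

    # Even the closest stored face is too far away to count as a match.
    if distances[best_index] > tolerance:
        return "Unknown"

    ref_id = known_ids[best_index]
    # Fall back to the raw id when no name was stored for it.
    return names_by_id.get(ref_id, ref_id)


# Made-up stored data: parallel lists of embeddings and ids, plus the name lookup.
stored_encodings = [[0.1, 0.2, 0.3], [0.9, 0.8, 0.7]]
stored_ids = ["001", "002"]
stored_names = {"001": "Alice", "002": "Bob"}

print(identify([0.12, 0.21, 0.29], stored_encodings, stored_ids, stored_names))  # Alice
print(identify([5.0, 5.0, 5.0], stored_encodings, stored_ids, stored_names))     # Unknown
print(identify([0.1, 0.2, 0.3], [], [], stored_names))                           # Unknown
```

Returning "Unknown" instead of raising an error keeps the video loop running when a stranger appears or when no embeddings have been stored yet.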

Now, create a new Python file recognition.py and paste the code below:

- Import the libraries:

    import face_recognition
    import cv2
    import numpy as np
    import pickle

- Load the stored pickle files:

    with open("ref_name.pkl", "rb") as f:
        ref_dictt = pickle.load(f)

    with open("ref_embed.pkl", "rb") as f:
        embed_dictt = pickle.load(f)

- Create two lists, one to store each ref_id and the other for the respective embedding:

    known_face_encodings = []
    known_face_names = []

    # Flatten the dictionary into two parallel lists: one embedding, one ref_id per entry.
    for ref_id, embed_list in embed_dictt.items():
        for my_embed in embed_list:
            known_face_encodings.append(my_embed)
            known_face_names.append(ref_id)

- Start the webcam to recognize the person:

    video_capture = cv2.VideoCapture(0)

    face_locations = []
    face_names = []
    process_this_frame = True

    while True:
        ret, frame = video_capture.read()
        if not ret:
            break

        # Detect faces on a quarter-size RGB copy of the frame to save time.
        small_frame = cv2.resize(frame, (0, 0), fx=0.25, fy=0.25)
        rgb_small_frame = cv2.cvtColor(small_frame, cv2.COLOR_BGR2RGB)

        # Only process every other frame.
        if process_this_frame:
            face_locations = face_recognition.face_locations(rgb_small_frame)
            face_encodings = face_recognition.face_encodings(rgb_small_frame, face_locations)

            face_names = []
            for face_encoding in face_encodings:
                name = "Unknown"
                # np.argmin fails on an empty array, so skip matching when nothing is stored.
                if known_face_encodings:
                    matches = face_recognition.compare_faces(known_face_encodings, face_encoding)
                    face_distances = face_recognition.face_distance(known_face_encodings, face_encoding)
                    best_match_index = np.argmin(face_distances)
                    if matches[best_match_index]:
                        name = known_face_names[best_match_index]
                face_names.append(name)

        process_this_frame = not process_this_frame

        # Scale the boxes back up to the full-size frame and draw them.
        for (top_s, right, bottom, left), name in zip(face_locations, face_names):
            top_s *= 4
            right *= 4
            bottom *= 4
            left *= 4

            cv2.rectangle(frame, (left, top_s), (right, bottom), (0, 0, 255), 2)
            cv2.rectangle(frame, (left, bottom - 35), (right, bottom), (0, 0, 255), cv2.FILLED)
            font = cv2.FONT_HERSHEY_DUPLEX
            # name is a ref_id or "Unknown"; show it as-is when no stored name exists for it.
            cv2.putText(frame, ref_dictt.get(name, name), (left + 6, bottom - 6), font, 1.0, (255, 255, 255), 1)

        cv2.imshow('Video', frame)

        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    video_capture.release()
    cv2.destroyAllWindows()
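The recognition loop detects faces on a frame resized with fx=0.25 and fy=0.25, so each box returned by face_recognition.face_locations is in quarter-size coordinates and must be multiplied by 4 before it is drawn on the full frame. The short sketch below isolates that step. scale_box is a hypothetical helper and the sample box is made up.

```python
def scale_box(box, factor):
    """Scale a (top, right, bottom, left) box, the order face_locations returns."""
    top, right, bottom, left = box
    return (top * factor, right * factor, bottom * factor, left * factor)


# A made-up box found on the quarter-size frame.
small_box = (30, 120, 90, 60)

# The frame was shrunk to 0.25 of its size, so scale back up by 1 / 0.25 = 4.
full_box = scale_box(small_box, 4)
print(full_box)  # (120, 480, 360, 240)
```

If the resize factor changes, the multiplier has to change with it, which is a reason to derive both from a single value.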

Now run the second part of the project to recognize the person:

python3 recognition.py

Summary:

This deep learning project teaches you how to develop a human face recognition project with the Python libraries dlib and face_recognition, using OpenCV for camera capture and display.

It also introduces the face_recognition API. We implemented this Python project in two parts:

- In the first part, we saw how to capture information about the structure of a human face as a face embedding, and how to store these embeddings.

- In the second part, we saw how to recognize a person by comparing new face embeddings with the stored ones.

can you please specify the version of all packages used in the above code, as I am getting issues with the package versions I guess so..

Could u plz suggest any project ideas about python..?

Can you provide me with a real time face recognition and detection project if. Any unknown face is detected it will send alert via mail and what’s app

I’ve tried to follow so many face recognition tutorials and they are either confusing or overwhelming. This is so simple yet elegant, but most importantly–functional!

Thanks so much for this…

Can I use this to build a Smart mirror that generates a Hello, ‘name’! message when the face is recognized, if so, how can I customise the code to make it do this?

Getting this error while recognizing second face

Traceback (most recent call last):

File “C:\Users\Manish\Desktop\recognition.py”, line 94, in

cv2.putText(frame, ref_dictt[name], (left + 6, bottom – 6), font, 1.0, (255, 255, 255), 1)

KeyError: ‘Unknown’

sir how can we insert multiple data in the frame

How many people can this program recognize?

Hay dear,

First of all thanks for good sharing.

I am trying to applying your mentioned code and i am copying it as it is but experiencing error.

“File “C:\Users\nmirza\AppData\Local\Temp/ipykernel_4556/3460261835.py”, line 36

python3 embedding.py

^

SyntaxError: invalid syntax “”

Your prompt help is required brother in this regard .

Ton of Thanks in advance.

Regards,

Nauman

thank you so much for this awesome learning about face recognition in python

i am unable to achieve , could you please help me.

when I run this code it will show this type of error

File “c:\All about OpenCV\recognition.py”, line 34, in

ret, frame = video_capture.read()

NameError: name ‘video_capture’ is not defined

what shoud i do

i am getting eror in embedings

from _dlib_pybind11 import *

ModuleNotFoundError: No module named ‘_dlib_pybind11’

Hi all!!. This code is great and the program works perfect!!.

I added these lines of code, as whenever your run the face recognition.py file, and there is no match for a recognized face, it outputs, error “Uknown”, and the program terminates. The following lines prevents the program from stalling, and keeps the camera running, labeling the undetected face with a “Unidentified Person” label. I took the liberty to change the colors from the label, to background black, and font white.

    if name == "Unknown":
        Unidientified_person = "Unidentified Person"
        cv2.putText(frame, Unidientified_person, (left + 15, bottom - 15), font, 0.7, (255, 255, 255), 1)

These lines of code should go between:

font = cv2.FONT_HERSHEY_DUPLEX

font = cv2.FONT_HERSHEY_DUPLEX

Like this:

    font = cv2.FONT_HERSHEY_DUPLEX
    if name == "Unknown":
        Unidientified_person = "Unidentified Person"
        cv2.putText(frame, Unidientified_person, (left + 15, bottom - 15), font, 0.7, (255, 255, 255), 1)
    else:
        cv2.putText(frame, ref_dictt[name], (left + 6, bottom - 6), font, 1.0, (255, 255, 255), 1)
    font = cv2.FONT_HERSHEY_DUPLEX

————————————————————————————————————————-

I hope this is usefull to you guys!!.

Kind Regards!

Thank you so much 🙂

File “recognition.py”, line 61, in

matches = face_recognition.compare_faces(known_face_encodings, face_encoding)

File “/home/vipul/.local/lib/python3.8/site-packages/face_recognition/api.py”, line 226, in compare_faces

return list(face_distance(known_face_encodings, face_encoding_to_check) <= tolerance)

File "/home/vipul/.local/lib/python3.8/site-packages/face_recognition/api.py", line 75, in face_distance

return np.linalg.norm(face_encodings – face_to_compare, axis=1)

numpy.core._exceptions.UFuncTypeError: ufunc 'subtract' did not contain a loop with signature matching types (dtype('<U32'), dtype(' dtype(‘<U32')

i am getting this error after running recognition.py .please help

same error…. What can i do now ?

Hi Shivam,

embeddings.py worked fine. But I got the following error when I ran recognise.py

Traceback (most recent call last):

File “recognise.py”, line 45, in

best_match_index = np.argmin(face_distances,axis=0)

File “”, line 6, in argmin

File “C:\Users\raghottama\Anaconda3\envs\env_dlib\lib\site-packages\numpy\core\fromnumeric.py”, li

ne 1269, in argmin

return _wrapfunc(a, ‘argmin’, axis=axis, out=out)

File “C:\Users\raghottama\Anaconda3\envs\env_dlib\lib\site-packages\numpy\core\fromnumeric.py”, li

ne 58, in _wrapfunc

return bound(*args, **kwds)

ValueError: attempt to get argmin of an empty sequence

[ WARN:0] global C:\Users\appveyor\AppData\Local\Temp\1\pip-req-build-6uw63ony\opencv\modules\videoi

o\src\cap_msmf.cpp (434) `anonymous-namespace’::SourceReaderCB::~SourceReaderCB terminating async ca

llback

Let me know what’s wrong please,

Thank you

Never mind, solved it.

but after running recognise.py fps has been dropped. it’s not smooth.

Let me know what to do to bring it to normal fps.

Thank you

How did you solved your error?

like even for me the face distances is an empty sequence

Where should I run this brother..i mean can i run this in python 3.8.1 or in vs studio or vs code..kindly reply with every steps involved in creating this project

When I try to import “face_recognition” module, the following error shows up:

RuntimeError: Error while calling cudaGetDevice(&the_device_id) in file /tmp/pip-wheel-mmuzni47/dlib/dlib/cuda/gpu_data.cpp:201. code: 100, reason: no CUDA-capable device is detected.

What should I do to proceed?

while running the recognise.py file i get this error

File “C:/Users/preet/AppData/Local/Programs/Python/Python39/recognise.py”, line 39, in

best_match_index = np.argmin(face_distances)

File “”, line 5, in argmin

File “C:\Users\preet\AppData\Local\Programs\Python\Python39\lib\site-packages\numpy\core\fromnumeric.py”, line 1269, in argmin

return _wrapfunc(a, ‘argmin’, axis=axis, out=out)

File “C:\Users\preet\AppData\Local\Programs\Python\Python39\lib\site-packages\numpy\core\fromnumeric.py”, line 58, in _wrapfunc

return bound(*args, **kwds)

ValueError: attempt to get argmin of an empty sequence

why is this error and how to resolve this.

Wondering why it does not prompt me to enter id after I have inserted the name. Could you please help?

Hi, Im new to this . Can somebody tell me , when I should press s 5 times ? Its not working on the terminal

Thank you man for help with learning CV

While embedding first photo camera is not getting off. Th .pkl file got generate but camera id on only. Si What to do?

I got the solution

Whole embedding first photo camera is not getting off. What to do?

It’s amazing

module ‘face_recognition’ has no attribute ‘face_locations’

you can try updating the face_recognition module: pip3 install --upgrade --upgrade-strategy only-if-needed face_recognition

Its a great project to work on.Thanks a lot for sharing this.

If you guys would upload the project of face recognition with voice then it will be more fun.I would be so grateful if you will response to this.Please…

did u excute yourself have u got the result

When i only record one face with the embedding script everything works fine, but when when i add the second one the recognition script crash when it recognize the first one and this error shows with the id of the first face

cv2.putText(frame, ref_dictt[name], (left + 6, bottom – 6), font, 1.0, (255, 255, 255), 1)

KeyError: ‘001’

I might be able to help you if you elaborate your problem a bit

Dear Team,

Thanks for the program, While recognizing i’m receiving the error “f=open(“ref_embed.pkl”,”rb”) FileNotFoundError: [Errno 2] No such file or directory: ‘ref_embed.pkl’. Can you please check and advise , in the execution path ref_name.pkl file is available.

Thanks & Regards,

Balaji Dharani

Hi Balaji,

I think you are getting this error because you may not have saved the “embed_dictt” dictionary in the “ref_embed.pkl” pickle file.

Check the last point in embedding.py , I think you have not executed the following lines:

    f=open("ref_embed.pkl","wb")
    pickle.dump(embed_dictt,f)
    f.close()

Please change the file name from “ref_name.pkl” to “ref_embed.pkl”